The difference between bandwidth and latency confuses a lot of people, but if you are an IT professional it is worth understanding, because sooner or later you will face a network problem related to one or the other. Part of the confusion comes from Internet providers, who tend to recommend more bandwidth for every speed-related problem. As we will see, the speed of an Internet connection is not always dictated by bandwidth.


What is Bandwidth?

To understand bandwidth and latency we need a clear definition of both, so let’s start with bandwidth. Bandwidth is the amount of data that can be transferred from one point to another in a given amount of time, normally measured per second. Internet providers typically advertise their bandwidth as something like 20/20 Mbps, which means that up to 20 megabits of data can be downloaded, and up to 20 megabits uploaded, each second (to or from YouTube, for example). Most people are more familiar with units like megabytes and gigabytes, but Internet providers still quote megabits, which makes the numbers look bigger: a byte is 8 bits, so a 20/20 Mbps connection moves only about 2.5 megabytes per second in each direction. The important thing to remember about bandwidth is that bandwidth is not speed, hence the confusion!
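The megabit-to-megabyte conversion is simple enough to check in a few lines of Python. This is only an illustrative sketch; the function name is made up for this example and the only fact it relies on is that one byte is 8 bits.

```python
BITS_PER_BYTE = 8


def mbps_to_megabytes_per_second(megabits_per_second: float) -> float:
    """Convert an advertised rate in megabits/s to megabytes/s."""
    return megabits_per_second / BITS_PER_BYTE


# The advertised 20 Mbps plan from the text, expressed in megabytes per second.
print(mbps_to_megabytes_per_second(20))  # 2.5
```

So an advertised 20 Mbps works out to 2.5 megabytes per second at best.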

What is Latency?

Latency is the time that a data packet takes to travel from one point to another. Another accurate term for latency is delay. One important thing to remember about latency is that it is a natural phenomenon: Einstein’s theory of relativity tells us that nothing travels faster than light, and even light needs time to cover a distance. So on the Internet, the time it takes a packet to travel (from Facebook’s data center to your computer, for example) is called latency.

What’s the difference between bandwidth and latency?

After reading the definitions above you have probably already spotted the difference between the two, but here is an analogy in case you are still confused. Imagine a highway with 4 lanes where the speed limit is 60 mph. On the Internet, bandwidth is the number of lanes on the highway, and latency is the 60 mph speed limit. If you want to increase the number of cars that travel along the highway you can add more lanes, but because the highway has too many curves and bumps you can’t raise the speed limit, so all cars still have to travel at 60 mph. It doesn’t matter how many lanes the highway has: each car takes the same time to reach its destination regardless of the size of the highway!

Why does increasing bandwidth increase download speed, then, you might ask. Isn’t that speed? No: by increasing bandwidth you increase capacity, not speed. Following the highway analogy, imagine that the vehicles traveling along that highway are all trucks delivering house bricks. All trucks have to travel at 60 mph, but when they arrive at their destination, 6 loads of bricks are delivered instead of 4, because 2 more lanes were added to the highway. The same thing happens when you add bandwidth to an Internet connection: the capacity increases, but the latency (the travel time) stays the same.
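To put numbers on the truck analogy, here is a deliberately simplified Python model in which a transfer takes one latency delay plus the time needed to push the data through the link. The function name and the sample values (50 ms of latency, a 100 MB file, a 10 KB request) are assumptions chosen for illustration, and the model ignores real-world effects such as protocol handshakes and congestion.

```python
def transfer_time_seconds(size_megabytes: float,
                          bandwidth_mbps: float,
                          latency_ms: float) -> float:
    """Rough transfer time: one latency delay plus size divided by bandwidth."""
    size_megabits = size_megabytes * 8          # 1 byte = 8 bits
    return latency_ms / 1000 + size_megabits / bandwidth_mbps


# Hypothetical link with 50 ms of latency, before and after doubling bandwidth.
for bandwidth in (20, 40):
    big = transfer_time_seconds(100, bandwidth, 50)     # 100 MB download
    small = transfer_time_seconds(0.01, bandwidth, 50)  # 10 KB request
    print(f"{bandwidth} Mbps: large file {big:.2f} s, small request {small * 1000:.0f} ms")
```

In this model, doubling the bandwidth cuts the large download from about 40 seconds to about 20, while the small request barely changes because almost all of its time is latency.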

What causes latency?

Latency is caused by the distance and the quality of the medium that Internet packets travel through. For example, latency over a fiber optic connection is typically lower than over a copper cable, and latency over a copper cable is lower than over a satellite connection. Satellites use the microwave spectrum to relay data connections from space. What increases the latency (that is, the lag) in satellite connections is the distance that packets have to travel back and forth.
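The effect of distance can be estimated with a back-of-the-envelope calculation. The sketch below assumes a geostationary satellite at an altitude of about 35,786 km sitting directly overhead, and a signal traveling at the speed of light in a vacuum. Both are simplifying assumptions made for this example, and the function name is hypothetical. Real links add processing and queuing delays on top.

```python
SPEED_OF_LIGHT_KM_PER_S = 299_792.458
GEOSTATIONARY_ALTITUDE_KM = 35_786  # assumed orbit, satellite directly overhead


def propagation_delay_ms(distance_km: float, speed_fraction: float = 1.0) -> float:
    """Time for a signal to cover distance_km at a fraction of light speed."""
    return distance_km / (SPEED_OF_LIGHT_KM_PER_S * speed_fraction) * 1000


one_hop = propagation_delay_ms(GEOSTATIONARY_ALTITUDE_KM)  # ground to satellite
# A request goes up and down, and the reply goes up and down again: four hops.
round_trip = 4 * one_hop
print(f"one hop: {one_hop:.0f} ms, round trip: {round_trip:.0f} ms")
```

Under these assumptions a single ground-to-satellite hop takes roughly 119 ms, so a full round trip is close to half a second before any equipment delay is counted.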

When is latency a problem?

Most people don’t notice and don’t care about latency as long as web pages, Netflix, YouTube, and other multimedia content load fast. Latency becomes a problem only when real-time data transfer is necessary, for example in VoIP calls and online face-to-face meetings. By my estimate, any latency beyond 200 ms will give you problems in real-time communication. In a Skype or VoIP call, for example, you will experience a noticeable delay, which makes it almost impossible to have a fluid conversation without interruptions.

How can I test latency?

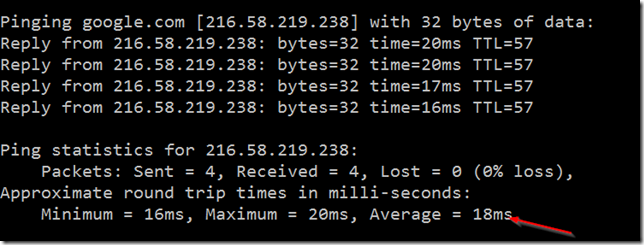

The quickest way to test latency from any computer is using the ICMP protocol with the Ping command. for example, if I want to test the latency between my computer and Google’s data center, I will type in the command ping google.com:

As you can see the average latency between my computer and Google’s data center is 18ms.
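If you would rather measure latency from a script, one rough alternative is to time how long a TCP connection takes to open. Note that this is not ICMP ping: it is a different technique that approximates one round trip, so the numbers will not match ping exactly. The function name, the default port 443, and the sample count below are assumptions made for this sketch.

```python
import socket
import statistics
import time


def tcp_connect_latency_ms(host: str, port: int = 443,
                           samples: int = 4, timeout: float = 2.0) -> float:
    """Average time in milliseconds to open a TCP connection to host:port."""
    # Resolve the name once (IPv4 only) so DNS lookups are not timed.
    address = socket.gethostbyname(host)
    timings = []
    for _ in range(samples):
        start = time.perf_counter()
        # Opening the connection costs roughly one network round trip.
        with socket.create_connection((address, port), timeout=timeout):
            elapsed = time.perf_counter() - start
        timings.append(elapsed * 1000)
    return statistics.mean(timings)
```

Calling `tcp_connect_latency_ms("google.com")` returns the average connect time in milliseconds, which you can compare against the figure that ping reports for the same host.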

Conclusion

I hope the difference between bandwidth and latency is clearer now. If you have any questions, suggestions, or comments, please use the comment box below.
